Is your AI fit for healthcare?

Powerful AI tools are rapidly reaching millions of consumers, an exciting but complex trend, especially in the high-stakes world of healthcare. General AI models can be helpful for organizing and making sense of scattered medical information. But when it comes to health, patients need a system that offers high-quality, clinically informed guidance in order to truly make a difference. As these tools evolve from passing exams to interpreting years of personal health history, we must ask: are general-purpose AI tools ready to navigate the complexities of human health?

AI purpose-built for healthcare

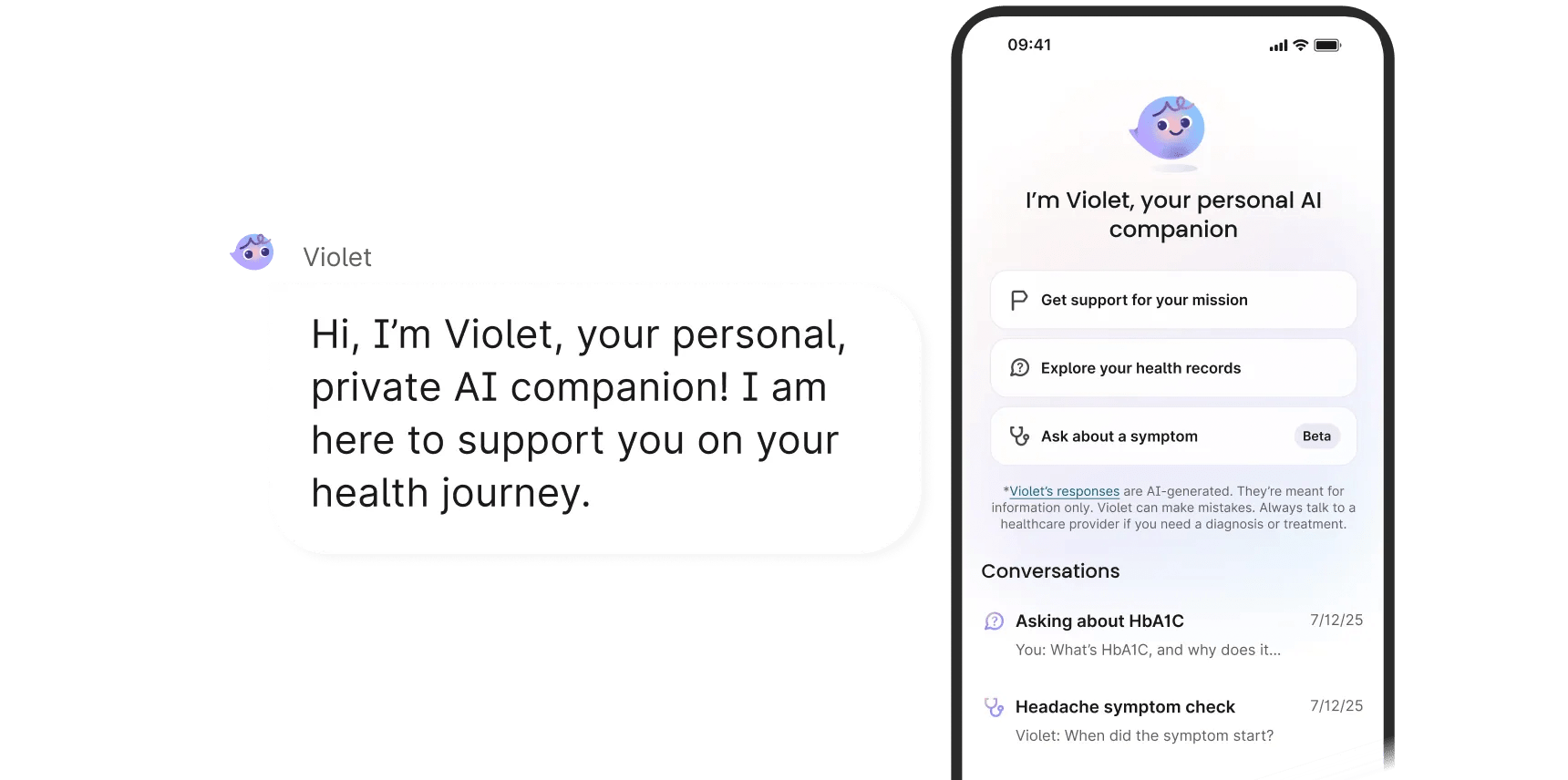

Closing gaps in care requires a purpose-built approach. As both a care and technology company, Verily’s clinical, scientific, data science, and engineering teams partnered to develop new approaches to symptom assessment and education tools, modeled on validated clinical protocols and the ‘in-clinic’ process. Verily recently introduced Violet, a personal, private AI companion accessible through the Verily Me app. With Violet at their fingertips, anyone using Verily Me can better understand their health history, prepare for doctor visits, and get questions answered about symptoms.

Behind Violet sit a number of specialized healthcare AI agents. One multi-agent architecture was developed to guide users through symptom assessment, provide self-care recommendations, and advise on when to see a healthcare professional. This clinically grounded approach, designed and modeled after the ‘in-clinic’ process, preserves patient context and supports individuals with clear, helpful information.

The clinical logic behind the AI companion in Verily Me

Within the Verily Me app, specialized healthcare AI agents sit behind Violet’s interface to address complex health tasks like assessing symptom context, based on the following principles:

Active investigation (evaluate, don't just listen). Patients know how they feel, but they may not realize which details are medically important. Through active investigation, agents prompt the user with relevant questions to gather deeper context and detail, and other agents actively evaluate the multi-turn conversation. The model leverages available medical records, not just what a patient verbally mentions.

Extracting more accurate results from messy data. Medical data is often messy and inconsistently represented. While standard AI models try to process all this raw information, mistakes and all, Verily believes that fine-tuning and organizing data leads to more accurate results. Within Verily Me’s secure and private environment, anyone using Verily Me can ask health questions that can be modeled on their fine-tuned health dataset.

High-confidence reasoning. The system's process is made more transparent and predictable. Instead of simply accepting a final answer, models are trained to check their own reasoning and conclusions. This minimizes hallucination risks, helps ensure that the final recommendations are rigorous and reliable, and lets us state to the user the rationale behind the AI's conclusion and recommendation.

Inside Violet: How a team of AI agents works to ensure safety

Violet addresses the challenges of symptom assessment via a purpose-built design philosophy. Rather than asking a single AI to do everything at once — collect symptoms, check records, and generate recommendations in one conversational turn — we decompose the assessment into specialized components, each with a focused clinical responsibility.

It’s like a relay race instead of a solo sprint. Rather than a single agent trying to manage the whole process, a coordinated team handles specific tasks and passes the 'baton' of information smoothly to the next.

Our architecture is inspired by how triage actually works in an urgent care setting. When you walk into an urgent care clinic, you don't have a single conversation with one person who handles everything, and you don’t have to know what information is important to share.

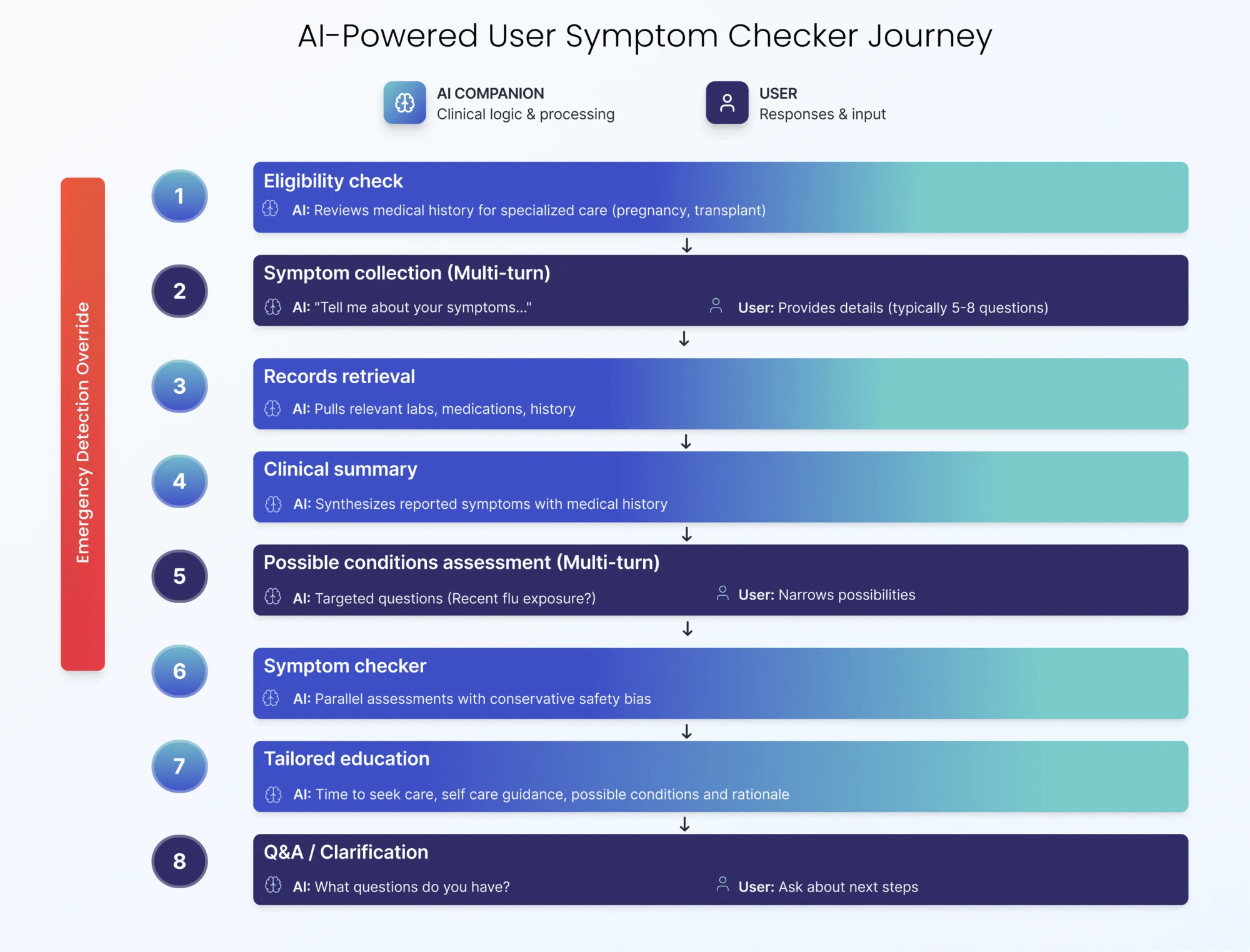

Violet’s patient journey: A coordinated sequence

When a patient asks Violet about a symptom, they progress through a carefully orchestrated architecture:

- Eligibility check: Reviews medical history for specialized care needs (e.g., pregnancy, transplant).

- Symptom collection (multi-turn): An agent asks "Tell me about your symptoms" and loops through clarifying questions.

- Records retrieval: Pulls relevant labs, medications, and history (bypassing the file-size limits of standard LLMs).

- Clinical summary: Synthesizes demographics, history, and labs.

- Possible conditions assessment: Narrows possibilities through targeted questions (e.g., "Is your cough dry or are you bringing up phlegm?").

- ‘What to do’ assessment: Runs parallel assessments with a conservative safety bias.

- Recommendations: Provides clear next steps (self-care, labs, time to seek care).

- Why we recommend this: Provides the agentic reasoning for the recommendation.

- Q&A: Allows the patient to ask clarifying questions.
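
As a rough illustration of the sequence above, the relay pattern can be sketched in a few lines of Python. This is not Verily's implementation: the `Baton` structure, the stage functions, and `run_pipeline` are hypothetical names, only four of the stages are shown, and the stage bodies are placeholders with no clinical logic.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Baton:
    """Context handed from one stage to the next (hypothetical structure)."""
    message: str
    history: List[str] = field(default_factory=list)
    notes: Dict[str, str] = field(default_factory=dict)
    trail: List[str] = field(default_factory=list)  # stages that ran, in order
    stopped_at: Optional[str] = None                # set when a stage ends the flow


# Illustrative only: the two examples the text gives for specialized care needs.
SPECIALIZED_CARE = {"pregnancy", "transplant"}


def eligibility_check(baton: Baton) -> Baton:
    if SPECIALIZED_CARE & set(baton.history):
        baton.notes["recommendation"] = "Please talk to your healthcare provider."
        baton.stopped_at = "eligibility_check"
    return baton


def symptom_collection(baton: Baton) -> Baton:
    # A real agent would loop through clarifying questions; here we store one message.
    baton.notes["symptoms"] = baton.message
    return baton


def clinical_summary(baton: Baton) -> Baton:
    history = ", ".join(baton.history) or "none on record"
    baton.notes["summary"] = f"Symptoms: {baton.notes['symptoms']}. History: {history}."
    return baton


def recommendations(baton: Baton) -> Baton:
    # Placeholder output; no clinical logic is implemented in this sketch.
    baton.notes["recommendation"] = "Placeholder next steps based on the summary."
    return baton


STAGES: List[Callable[[Baton], Baton]] = [
    eligibility_check, symptom_collection, clinical_summary, recommendations,
]


def run_pipeline(baton: Baton) -> Baton:
    """Run stages in order, stopping early if any stage ends the flow."""
    for stage in STAGES:
        baton.trail.append(stage.__name__)
        baton = stage(baton)
        if baton.stopped_at is not None:
            break
    return baton
```

Calling `run_pipeline(Baton(message="dry cough", history=["asthma"]))` runs all four stages in order, while a history containing `"pregnancy"` stops the flow at the eligibility check. Each stage only reads and extends the shared context, which is what keeps each responsibility narrow.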

Built for trust: The core safety systems of Violet

Our architecture is built on a safety-first mentality, ensuring checks occur continuously throughout the patient journey rather than at a single point of failure.

Multi-stage emergency detection: We don't wait until the end to flag danger. Emergencies are screened for during symptom collection, after collection, and during the final assessment. If any stage detects a high-acuity scenario, the system is built to immediately override the workflow and guide the patient to the appropriate level of care.
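
One way to picture screening at several checkpoints is the minimal sketch below. It rests on loud assumptions: the phrase list in `RED_FLAGS`, the `final_acuity` score, and the `ACUITY_LIMIT` threshold are all hypothetical placeholders, not clinical rules and not Violet's actual detection logic.

```python
from typing import List, Tuple

# Placeholder phrases for illustration only; not a clinical rule set.
RED_FLAGS = ("chest pain", "trouble breathing")
ACUITY_LIMIT = 0.8  # hypothetical threshold for the final-assessment check
EMERGENCY_MESSAGE = "This may be an emergency. Please seek emergency care now."


def screen(text: str) -> bool:
    """Hypothetical screen: True if any placeholder red flag appears in the text."""
    lowered = text.lower()
    return any(flag in lowered for flag in RED_FLAGS)


def assess(turns: List[str], final_acuity: float = 0.0) -> Tuple[str, str]:
    """Return (checkpoint, message), overriding the flow at the first detection.

    `final_acuity` stands in for a risk score that a final assessment step
    might produce; it is an assumption made for this sketch.
    """
    # Checkpoint 1: screen every turn while symptoms are being collected.
    for turn in turns:
        if screen(turn):
            return ("during_collection", EMERGENCY_MESSAGE)

    # Checkpoint 2: screen the full picture once collection is complete.
    combined = " ".join(turns)
    if screen(combined):
        return ("after_collection", EMERGENCY_MESSAGE)

    # Checkpoint 3: check the risk estimated during the final assessment.
    if final_acuity >= ACUITY_LIMIT:
        return ("final_assessment", EMERGENCY_MESSAGE)

    return ("completed", f"Routine guidance for: {combined}")
```

The second checkpoint matters because a warning sign can be spread across turns: `assess(["I have chest", "pain since this morning"])` passes the per-turn screen but is caught once the turns are combined.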

Explicit eligibility screening: The system automatically screens for complex histories (such as pregnancy, active cancer, organ transplants, or severe autoimmune conditions) and guides patients to talk to their healthcare provider about their symptoms.

Predictable, structured outputs: Our agents are architected to deliver structured outputs based on clinical practice guidelines. Every assessment follows a strict schema: a clear recommendation, a list of possible conditions, specific self-care steps, guidance on when to see a doctor, and “why we recommend this” (the agentic reasoning). This format focuses on giving users helpful advice right away, while also showing users exactly how the AI reached its conclusion.
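
To make the idea of a strict schema concrete, here is a minimal sketch that validates a model's JSON output against the five parts listed above. The field names and the `parse_assessment` helper are assumptions chosen for illustration; the actual schema is not published in this post.

```python
import json
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class Assessment:
    """Hypothetical schema mirroring the five parts named in the text."""
    recommendation: str
    possible_conditions: List[str]
    self_care_steps: List[str]
    when_to_see_doctor: str
    why_we_recommend_this: str


def parse_assessment(raw: str) -> Assessment:
    """Parse JSON text and reject anything that does not match the schema."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("assessment must be a JSON object")

    expected = {f.name for f in fields(Assessment)}
    if set(data) != expected:
        missing = sorted(expected - set(data))
        extra = sorted(set(data) - expected)
        raise ValueError(f"schema mismatch: missing={missing}, extra={extra}")

    for name in ("possible_conditions", "self_care_steps"):
        value = data[name]
        if not isinstance(value, list) or not all(isinstance(x, str) for x in value):
            raise ValueError(f"{name} must be a list of strings")

    for name in ("recommendation", "when_to_see_doctor", "why_we_recommend_this"):
        value = data[name]
        if not isinstance(value, str) or not value.strip():
            raise ValueError(f"{name} must be a non-empty string")

    return Assessment(**data)
```

Rejecting incomplete output, rather than displaying whatever the model returned, is what makes the format predictable: an answer with no rationale never reaches the user.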

Human-centric design: To ensure the clinical rigor of the design translates into patient comprehension, we conducted user research and used user‑centered design with iterative prototyping. This allowed us to identify high‑value improvement areas, uncover beliefs and barriers to AI use, and measure sentiment around privacy and safety. We focused on reducing cognitive burden and "confusion-related" errors, and prioritized the key principles of transparency and empathy. We developed a linear, easy-to-answer flow that was designed for the needs of the intended user population in its intended environment.

Quality and model lifecycle: Verily’s Quality Management System (QMS) ensures strict adherence to regulatory and industry standards. Before release, every AI model must clear rigorous compliance controls; post-launch, we utilize formal change controls to vet and approve every update. This end-to-end lifecycle ensures that our technology remains safe, effective, and high-quality from initial design through retirement.

Addressing the output variability of LLMs with a design built for healthcare

To solve the output variability problem inherent in LLMs, we treat the model less like a chatbot and more like a medical board.

- Diverse reasoning personas: Each assessment has a different reasoning style, and collectively, they help cover a broad range of risks by considering multiple perspectives.

- Conservative consensus: We aggregate these opinions and apply a configurable "conservativeness score", calibrating the final recommendation to prioritize safety (e.g., favoring "see a doctor" over "self care" when in doubt).
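
The aggregation step can be illustrated with a small sketch. Everything here is an assumption made for the example: the three-level scale in `LEVELS`, the idea of treating the conservativeness score as a quantile over the sorted votes, and the function name. The post does not describe how the real score is defined or applied.

```python
import math
from typing import List

# Hypothetical scale, ordered from least to most cautious.
LEVELS = ["self_care", "see_doctor", "emergency"]


def conservative_consensus(votes: List[str], conservativeness: float) -> str:
    """Pick one recommendation from several persona votes.

    conservativeness = 0.0 returns the least cautious vote, 1.0 the most
    cautious. Values in between select a quantile of the sorted votes,
    rounding up so that ties lean toward caution.
    """
    if not votes:
        raise ValueError("at least one vote is required")
    if not 0.0 <= conservativeness <= 1.0:
        raise ValueError("conservativeness must be between 0 and 1")

    ranks = sorted(LEVELS.index(vote) for vote in votes)
    index = math.ceil(conservativeness * (len(ranks) - 1))
    return LEVELS[ranks[index]]
```

With the votes `["self_care", "self_care", "see_doctor"]`, a score of 1.0 returns `"see_doctor"` and a score of 0.5 returns `"self_care"`. With an even split of `["self_care", "see_doctor"]`, a score of 0.5 returns `"see_doctor"`, which matches the "when in doubt" behavior described above.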

Assessing safety: Violet's early performance in beta

Safety goes beyond design principles and architecture. It must be reinforced through clinically informed development, evaluation, and monitoring.

The Verily Me difference: Built for healthcare

This deep dive into our comprehensive approach highlights the critical difference between Verily Me and other AI tools currently available for asking questions about your health.

General-purpose LLMs are powerful, but Verily Me’s Violet takes a different approach. As a coordinated set of healthcare AI agents, Violet embeds the individual steps of the clinical process for assessing symptoms, much like a coordinated team at an urgent care clinic. Even in its early days as a beta feature, Violet is delivering more personalized, context-aware support for users.

We’re just getting started — and we begin with an uncompromising commitment to user safety. By building a multi‑agent architecture with multi-stage emergency detection, we’re delivering a system grounded in clinical rigor and focused on patient well‑being.

And remember:

*Violet’s powerful, but she can’t treat or diagnose.

Violet is an AI-powered companion. She’s not human. Always consult a licensed healthcare provider for diagnosis or treatment.

Violet is developed in collaboration with medical experts and undergoes regular optimization to ensure high standards of accuracy and safety.

Violet can make mistakes. She bases her responses on the info you give her, your available health records, and training data. Errors or gaps in any of these sources could affect Violet’s responses.

Violet can only offer guidance for a limited set of situations; see verilyme.com for details.

Acknowledgement:

Special thanks to the Verily clinical, science, data science and AI, and product teams whose input made this work possible.