It’s time to welcome AI to the care team

Agentic AI for enterprise health care remains primarily in the back office rather than at the bedside. According to a recent American Medical Association survey, 81% of physicians now use AI in their practice, mostly for administrative workflows, even though 76% see an advantage to using AI tools in patient care. AI is most commonly used for summaries of medical research (39%), creation of discharge instructions and care plans (30%), and billing documentation and chart summarization (28%). In all of these, the perceived risk is low and the potential cost savings are quantifiable. Only 17% of physicians reported using AI for assistive diagnosis, and reported use for patient-facing recommendations was much lower. When it comes to delivering care in clinical settings, AI remains in the background.

This is in stark contrast to consumers’ widespread appetite to engage with AI directly on symptoms, possible diagnoses, and treatment options. According to a recent report published by OpenAI, more than 40 million Americans use ChatGPT daily to ask questions about healthcare. Physicians see this too: 30% think a majority of their patients are probably using AI for health-related guidance. Patients are uploading their medical records to LLMs, potentially at risk to their privacy, to ask clinical questions about their health. It is increasingly evident that consumers want to ask a question, get an answer informed by their medical record, and take action in line with clinical guidelines and best practice. They don’t want to wait on a phone call, a portal message, or an appointment.

At the same time, the policy and regulatory environment shaping the use of AI in care delivery is increasingly favorable and quickly evolving. HHS has asked for public input on how to accelerate the adoption and use of AI in clinical care, the FDA has been working to clarify regulatory pathways for AI-enabled medical devices, and a national policy framework for AI has been announced to spur AI innovation across industry sectors.

Amidst all of this momentum, we have to ask ourselves: what is holding us back? What will it take for us to embrace the role of AI as part of the patient’s care team?

First, a shift in mindset

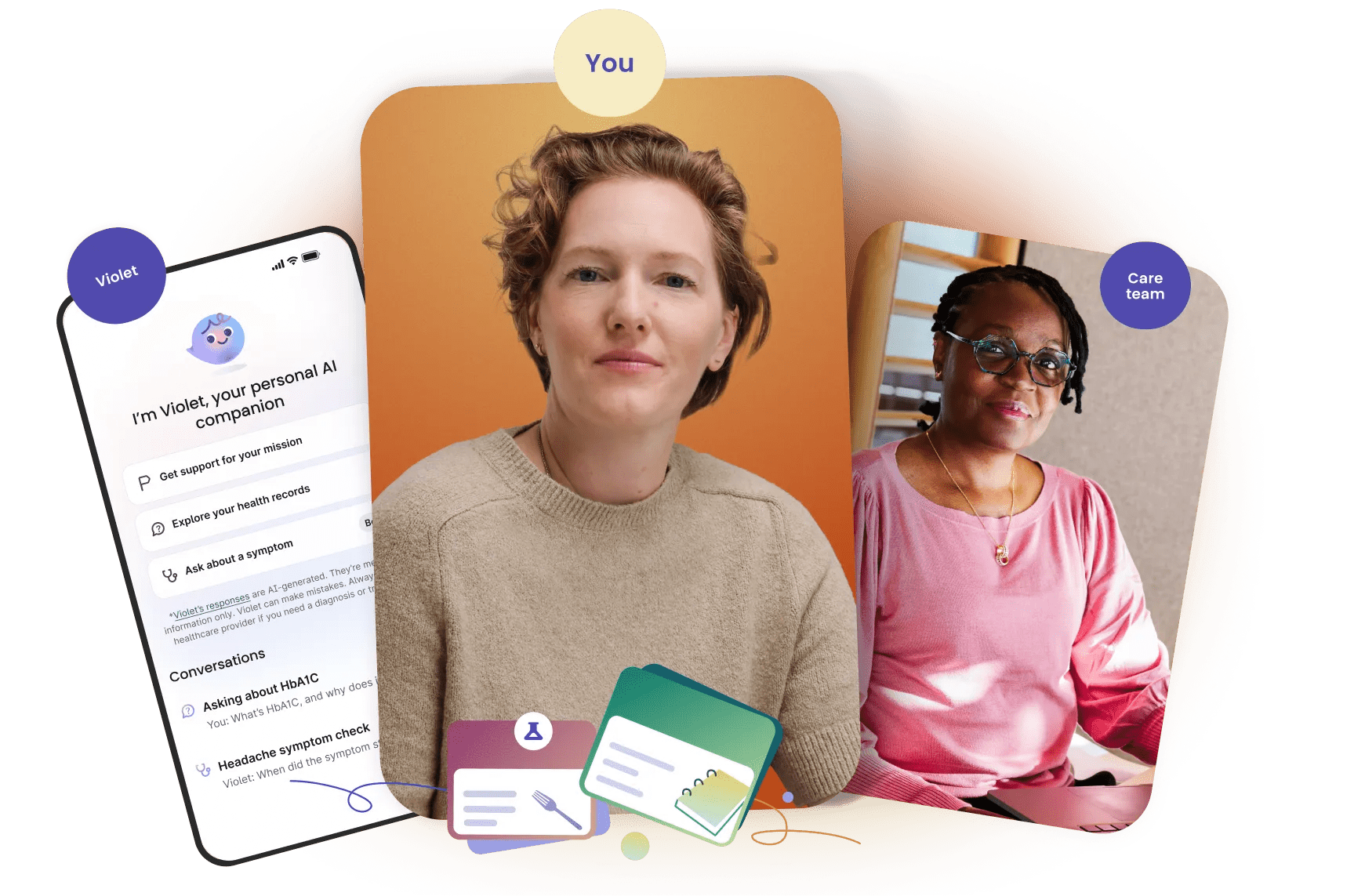

One way to think about AI is as a digital team member: one that handles a defined scope of practice and works alongside clinicians within clear boundaries.

The American Medical Association (AMA) defines scope of practice as “those activities that a person licensed to practice as a health professional is permitted to perform.” This framework allows flexibility in how activities are conducted while outlining clear boundaries based on the individual's training, evaluation, and certifications. For example, the scope for registered nurses includes monitoring patient vitals, administering medication, and discussing care plans, but it does not include diagnosis or prescribing medication. For physicians, the activities and boundaries within their scope depend on their specialty. Pediatricians, for instance, can diagnose and treat children for acute and chronic conditions but cannot perform surgery on children unless they have completed both a general surgery residency and a pediatric surgery fellowship.

Under a scope of practice, human clinicians can practice with some flexibility, without needing to give the exact same response to every similar patient, while clearly understanding the limits on what they can do and say to the patients under their care. Similarly, AI tools functioning under a defined scope of practice could produce outputs with the appropriate variation that the standard of care allows. When a model output reaches the edge of that boundary, the AI tool could invoke another role on the care team. It could escalate vertically, up the team’s lines of responsibility, in the same way that physician assistants hand off complex diagnostic cases to physicians. Or it could refer horizontally, across the team, engaging a complementary role, such as when the patient needs a medical explanation from a different perspective.
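
To make the escalation idea concrete, here is a minimal Python sketch of how an AI agent might route a request under a defined scope of practice. It assumes a scope can be expressed as an explicit set of permitted activities, a single role for vertical escalation, and a lookup of complementary roles for horizontal referral. `ScopeOfPractice`, `route`, the activity names, and the dietitian referral are all hypothetical illustrations, not a description of any real product.

```python
from dataclasses import dataclass, field
from enum import Enum


class Route(Enum):
    HANDLE = "handle within scope"
    ESCALATE_VERTICAL = "escalate up the care team"
    REFER_HORIZONTAL = "refer across to a complementary role"


@dataclass(frozen=True)
class ScopeOfPractice:
    """Hypothetical scope definition for an AI agent on a care team."""
    role: str
    permitted: frozenset[str]   # activities this role may perform on its own
    escalate_to: str            # role one level up (vertical escalation)
    referrals: dict[str, str] = field(default_factory=dict)  # activity -> peer role (horizontal)


def route(scope: ScopeOfPractice, activity: str) -> tuple[Route, str]:
    """Decide who should handle an activity under the given scope."""
    if activity in scope.permitted:
        return Route.HANDLE, scope.role
    if activity in scope.referrals:
        return Route.REFER_HORIZONTAL, scope.referrals[activity]
    # Anything not explicitly in scope defaults to escalation to a human role.
    return Route.ESCALATE_VERTICAL, scope.escalate_to


# Hypothetical example: an education agent that explains conditions and care plans.
education_agent = ScopeOfPractice(
    role="education agent",
    permitted=frozenset({"explain_condition", "summarize_care_plan"}),
    escalate_to="physician",
    referrals={"nutrition_question": "dietitian"},
)

action, who = route(education_agent, "diagnose_symptom")
print(action.name, who)  # ESCALATE_VERTICAL physician
```

Defaulting anything unrecognized to vertical escalation is a deliberate choice in this sketch: a request outside the defined scope is handed to a human rather than answered.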

Second, what’s necessary to give AI a role

Defining the role of AI within the familiar scope of practice framework helps to conceptualize how AI could work as part of a patient’s care team. Making this a reality, however, will first require clarity on many unanswered questions, including:

- Evaluation: While work is underway to clarify regulatory expectations for AI used in medical devices, the level and type of evidence available for a given AI tool can vary, particularly when the tool’s scope (such as education or coaching) does not cross the risk thresholds for AI-enabled device software functions. Without high-quality evidence from evaluations, it will be challenging for clinicians and patients to adopt AI tools at scale and to compare them on safety, security, accuracy, value, and return on investment. Alignment on national standards for credentialing or endorsement, like URAC’s Healthcare AI Accreditation or the DiMe Seal, could provide a foundational level of confidence in AI tools that are integrated into various aspects of patient care.

- Ongoing monitoring & improvement: Evaluation and growth for clinicians do not end when they pass their medical boards or finish residency; working within a scope of practice is a lifelong learning process. Clinicians are subject to performance reviews and re-credentialing for hospital privileges, as well as continuing education, self-assessment, and improvement requirements to maintain board certification. Similarly, we need common standards for how AI performance will be monitored over time as models learn and change.

- Reimbursement: Human clinicians bill for the services they provide, but what if AI provides the service? How will activities like AI-delivered condition education or symptom consultations be reimbursed? To spur adoption, we need to test different payment models for digital technology and AI-delivered care services.

- Workflow and data integration: Human care teams often function efficiently together, but integrating their roles has taken time and work. Integration of digital technologies into clinical care workflows is a longstanding challenge, and we need to continue developing and testing processes for integrating AI into patient care workflows and existing health IT systems to avoid further care fragmentation. Work to define data standards, data rights, and transparency requirements, along with real-world evidence of value, will serve as the foundation for effective workflow integration.

- Liability structures: Professional liability protection and medical malpractice are integral to care delivery. But because the use of AI in care involves multiple responsible actors, such as developers, clinicians, and hospitals, many different approaches to liability have been considered. Much work remains to establish robust national liability frameworks for AI.

Our approach at Verily

At Verily, we are thinking intentionally about ways to help bring clarity to some of these unanswered questions. We consider the intended care scope for our AI agents, and how they might be used within a patient’s broader care paradigm, when establishing criteria for evaluation and ongoing monitoring and improvement.

Verily grounds the use of AI models in a foundation of safety and compliance, while also recognizing that individual AI models differ in risk, complexity, and regulatory requirements. As a result, each AI model is individually evaluated for its complexity, risk, and the appropriate regulatory and compliance path.

For evaluation, we employ a risk-based testing approach for our AI agents, matching the level of testing to the clinical risk and defined scope. While wellness and education use cases focus on rapid iteration based on user feedback, higher-risk use cases must pass formal validation standards and undergo clinician-led testing. Similar to how clinicians are evaluated and trained by more experienced professionals in apprenticeships or residency programs, the performance of our AI agents is evaluated by qualified healthcare professionals for safety and accuracy.
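
A risk-based testing policy like the one described above can be thought of as a lookup from risk tier to required evidence. The sketch below assumes just two tiers and uses hypothetical evidence labels (`user_feedback_iteration`, `formal_validation`, `clinician_led_testing`); it illustrates the idea of matching testing to risk and is not Verily’s actual release criteria.

```python
from enum import Enum


class RiskTier(Enum):
    WELLNESS_EDUCATION = "wellness or education"
    HIGHER_RISK = "higher clinical risk"


# Hypothetical evidence requirements per tier (labels are illustrative only).
REQUIRED_EVIDENCE: dict[RiskTier, frozenset[str]] = {
    RiskTier.WELLNESS_EDUCATION: frozenset({"user_feedback_iteration"}),
    RiskTier.HIGHER_RISK: frozenset({"formal_validation", "clinician_led_testing"}),
}


def missing_evidence(tier: RiskTier, completed: set[str]) -> set[str]:
    """Return the evidence still required before an agent in `tier` can be released."""
    return set(REQUIRED_EVIDENCE[tier] - completed)


def ready_for_release(tier: RiskTier, completed: set[str]) -> bool:
    """An agent is releasable only when every required check for its tier is done."""
    return not missing_evidence(tier, completed)
```

Keeping the requirements in a single table makes the policy easy to review: raising the bar for a tier is a one-line change rather than a change scattered across release code.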

For ongoing monitoring and improvement, we are developing a monitoring system that uses automated alerts to flag potential safety concerns, model errors, or uses beyond the AI agent’s intended scope. We are building in direct pathways for automated and user-initiated escalation to human clinicians, similar to how nurse practitioners or medical residents follow protocols for escalating to attending physicians when a task exceeds their defined scope or skill set. We also plan to conduct periodic, formal audits of our AI models, re-evaluating each application against established test sets to proactively identify and address any performance degradation or "model drift" that may occur over time. Just as performance concerns with human clinicians are escalated to review boards and evaluated for disciplinary action, we plan to escalate model performance issues to a cross-functional governance team for review. When needed, we intend to modify the model's guardrails, roll back to a previous version, or temporarily take the agent offline to ensure user safety.
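
The periodic audit described above can be sketched as re-scoring a model against a fixed test set and comparing the result to a recorded baseline. This minimal Python example assumes exact-match accuracy as the metric and maps the size of the drop to the responses named above (governance review, rollback, taking the agent offline). The thresholds, `AuditCase`, and function names are hypothetical; a real audit would likely use richer, clinically meaningful metrics.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class AuditCase:
    prompt: str
    expected: str  # reference answer, assumed to be approved by qualified reviewers


def audit_accuracy(model: Callable[[str], str], test_set: Sequence[AuditCase]) -> float:
    """Exact-match accuracy of `model` on a fixed, established test set."""
    if not test_set:
        raise ValueError("audit test set must not be empty")
    correct = sum(model(case.prompt) == case.expected for case in test_set)
    return correct / len(test_set)


def audit_action(baseline: float, current: float,
                 review_drop: float = 0.02,
                 rollback_drop: float = 0.05,
                 offline_drop: float = 0.10) -> str:
    """Map the accuracy drop since baseline to a response (hypothetical thresholds)."""
    drop = baseline - current
    if drop >= offline_drop:
        return "take_offline"
    if drop >= rollback_drop:
        return "roll_back"
    if drop >= review_drop:
        return "governance_review"
    return "no_action"
```

In this sketch, any change to the model’s guardrails is left to the governance review step; the thresholds only decide how urgently a drift signal is escalated.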

Ultimately, we believe patient care can be both clinician-led and AI-forward, with appropriate workflows and engagements driven by technology. As the broader industry begins to clarify national standards and frameworks for evaluation, monitoring, reimbursement, workflow integration, and accountability, a future where AI functions as part of a patient’s care team is within reach.

*The information contained on this page is intended to outline Verily’s general product direction and is not a commitment or legal obligation to deliver any functionality. Product capabilities, timeframes and features are subject to change and should not be viewed as commitments.